Will’s position on the role of normative arguments in the debate is unclear. He seems to think that they play some role, but what exactly? If the “conceptual” argument for originalism is strong, are the normative issues irrelevant? Are they some kind of tie-breaker? I would like to know more.

Let us turn to the conceptual argument. Will likes Alexander’s and Lawson’s argument that courts are supposed to enforce the Constitution, and so they need to interpret the Constitution so that they know what they are trying to enforce, and interpreting the Constitution means figuring out what the original understanding was. But this is merely a semantic argument. Alexander, Lawson, and Will just define “Constitution” to mean “the text” rather than the set of norms that structure and restrict the government. That’s like saying that antitrust law is the Sherman Act rather than the body of norms that courts have created under the authority of that Act. This statement is either plainly wrong or based on idiosyncratic definitions.

Steve Sachs’ argument is more sophisticated. Sachs is a positivist, and he believes that, as a purely empirical matter, we Americans believe that our constitutional law consists of the original understanding, and any legal norms that appear to deviate from it are invalid unless they can be derived from continuity rules that existed at the founding. If that’s what we believe, that’s the law, and if the justices have a duty to obey the law, then they should be originalists.

Sachs does not actually cite any evidence about Americans’ beliefs, and for this reason stops short of claiming that originalism is right. Will does think that such evidence exists. Yet Americans seem to think that they have constitutional rights that protect all sorts of things that are not part of the original understanding. Will thinks that if forced to confront these inconsistencies, people would choose the original understanding over their favorite rights, just as people accept legal judgments about statutes and common law that turn out to violate strongly held moral intuitions about what the law is or should be. My view is that people continue to accept the authority of the Constitution and the Supreme Court precisely because the Court has recognized popular rights. In Sachs’ terms, our “continuity rule” recognizes the power of the Supreme Court to effectuate “amendments” to the text under certain conditions. I would add that it recognizes the authority of the other branches to do so as well.

If Will’s position has any force, it derives from the fact that the public does seem to venerate the 1789 text and the founding generation; and, moreover, that Supreme Court justices do not openly acknowledge that they have the power to amend the text on their own. I have two responses:

First, there is an important ceremonial aspect to our political and legal culture. Common law judges also say that they “find” law rather than “make” law, even though all sophisticated observers know that the opposite is true. Chief Justice Roberts says that he calls balls and strikes, and again no sophisticated person believes him. When Justice Breyer says that he enforces the “spirit” of the 1789 text rather than that he makes pragmatic judgments or enforces precedents (though he says that also), he is giving a ceremonial bow to the founders, not committing himself to the original understanding. (The ceremony is a strong, persistent, but strange part of our political culture. It is temporarily suspended when we remember that many founders were slave owners, but otherwise it remains in full force.)

Second, I think Will gets our legal culture wrong. Originalism is a minority position supported by only two justices on the Supreme Court who practice it inconsistently, and hardly any others throughout our entire history. Continuity-to-the-last-generally-accepted-change-in-constitutional-norms is not the same thing as continuity-to-the-founding. Numerous justices and judges–Breyer is just one–have criticized originalism in the clearest of terms and have suffered no adverse consequences, no blast of public outrage of the sort that would occur if a justice said (to use Sachs’ examples) that we are bound by the French constitution or Klingon law or the Articles of Confederation. When President Obama said that he wanted an “empathetic” Supreme Court justice, everyone understood what he meant, and while plenty of people criticized him, his two choices have been confirmed.

My last point is that if we really think that the case for originalism is empirical (I have my doubts, but that is for another time), then there must be an empirical way to test it. There are all kinds of confounding problems–who is the relevant audience, for example, how much do they need to know, and how large does a consensus have to be? But a simple starting point is a survey question that forces the respondent to choose between an originalist outcome and a popular one. Here’s one.

In the course of searching a person’s home pursuant to a valid warrant, the police discover that the person owns birth control pills. The legislature of the state in which the search took place has recently passed a law making it a criminal offense to own birth control pills. This statute conflicts with Supreme Court precedent; however, the precedent itself is inconsistent with the original understanding of the Constitution in 1789, which does not mention contraception. Should the police arrest the owner of the birth control pills based on probable cause that she violated the statute? Should she be tried and convicted?

I realize that some originalists believe that precedent matters. But under the continuity version of originalism described by Sachs, this seems like a straightforward test case. Or if not, I’d be pleased to hear a better one.

The implosion of Mt. Gox exposes a paradox about bitcoin, which I have been groping for in some writings. Assume that the bitcoin software works perfectly (though there is some question about this) or can be made to work perfectly (as advocates argue). Bitcoin still has a problem with the “joints”–the gap between the network itself and the ordinary (non-expert) users without whom it could never be more than a marginal phenomenon. Ordinary people will need to rely on institutions–exchanges like Mt. Gox and other services–and they will not rely on them unless they can trust them. But, unlike bitcoin itself, these institutions are run by human beings who can make mistakes or engage in fraud. Hence the need for regulation. Thus, bitcoin will prosper only if it is integrated into the regulatory infrastructure, but that means that it cannot operate as a decentralized currency outside of government control. Yet it is precisely that feature that makes bitcoin so attractive to its most ardent supporters. I expect that legitimate investors and merchants who may benefit from it will push the government to normalize bitcoin by regulating the intermediary bitcoin institutions, at which point it will no longer be an autonomous currency but just a useful piece of software.

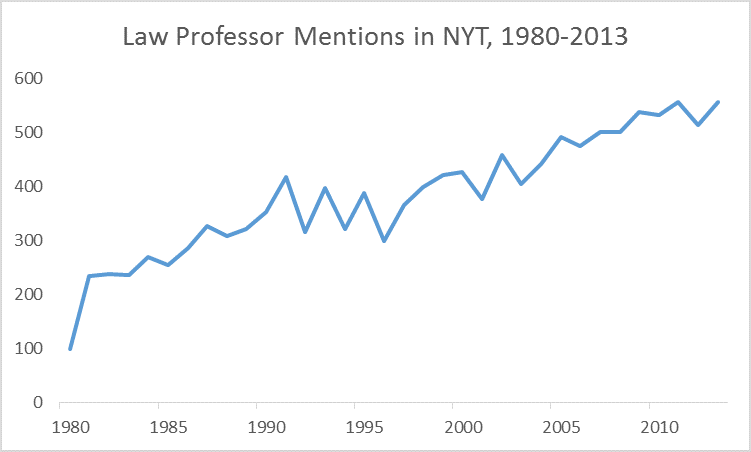

Some revolutions take place with a bang. The empirical revolution in legal studies–and by this I mean rigorous data analysis–was, by contrast, hardly perceptible at first, but now empirical work is everywhere. Much of the most interesting work being done right now in the legal academy–in such diverse fields as civil procedure, bankruptcy, international law, and constitutional law–reflects the rigorous statistical methods that Ted championed. At least five members of my faculty are trained in statistical methods, and several others do statistical work via collaborations. Twenty years ago hardly anyone did.
Ted provided important institutional support for empirical legal scholarship but, most important, served as a model for those who followed him. I’m not sure he received sufficient recognition for his important methodological contributions to legal scholarship in his lifetime. Our paths crossed only a few times but each time it was terrifically rewarding for me. He will be sorely missed.

Jeffrey Gordon has posted a

We read papers by Bruce Ackerman, David Strauss, and Jeremy Waldron. I was familiar with this work, but rereading these articles after the originalism pieces, it was easier to appreciate Ackerman’s argument that common-law constitutionalism doesn’t come to terms with the role of popular sovereignty in American political culture. Who ever talks about the common law anymore? Or of great common-law judges? But then Ackerman’s “originalism,” according to which public deliberation takes the place of the Article V process, founders on ambiguity as to what counts as an amendment. I tend to think that the justices implement their ideological preferences subject to some real but hard-to-specify institutional constraints about which they are (sometimes) willing to hear argument, above all precedent. If that is common-law constitutionalism, I suppose I’m on board.